Aktu’s Quantum Notes can help you unlock the secrets to B.Tech success. These succinct yet thorough notes concentrate on the most important and often asked questions in analogue and digital communication. Today is the day to ace your tests!

Unit-5 Time Division Multiplexing


Q1. Define and draw the TDM system.

Ans. 1. Time division multiplexing (TDM) enables the joint utilization of a common communication channel by a plurality of independent message sources without mutual interference among them.

- 2. The concept of TDM is shown in Fig. Each input message signal is first band-limited by a low-pass anti-aliasing filter to remove the frequencies that are non-essential to an adequate signal representation. The filter outputs are then applied to a commutator.

- 3. Commutator is usually implemented using electronic switching circuitry. Mainly two functions are done by commutator :

- a. To take a narrow sample of each of the N input messages at a rate fs that is slightly higher than 2W, where W is the cutoff frequency of the anti-aliasing filter.

- b. To sequentially interleave these N samples inside the sampling interval Ts.

- 4. After the commutator, the multiplexed signal is applied to a pulse modulator, which converts it into a form suitable for transmission over the common channel.

- 5. The received signal is applied to a pulse demodulator at the system’s receiving end, which executes the opposite function of the pulse modulator.

- 6. The decommutator, which runs in sync with the transmitter’s commutator, distributes the narrow samples generated at the pulse demodulator output to the necessary low pass reconstruction filters. The successful execution of this synchronization is crucial.

- 7. The TDM system is extremely vulnerable to dispersion in the common channel, such as fluctuations in amplitude with frequency or a lack of proportionality in phase with frequency.

- 8. To ensure that the system operates satisfactorily, the channel’s magnitude and phase responses must be accurately equalized.
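The timing relations in points 3(a) and 3(b) can be checked with a little arithmetic. The sketch below is only an illustration: the function name `tdm_timing` and the `guard_factor` of 1.1 (standing in for "slightly higher than 2W") are assumptions, not values from these notes.

```python
def tdm_timing(w_hz, n_channels, guard_factor=1.1):
    """Return (fs, Ts, slot) for an N-channel TDM system.

    w_hz         -- cutoff frequency W of the anti-aliasing filter, in Hz
    n_channels   -- number N of input messages
    guard_factor -- hypothetical margin above the Nyquist rate 2W
    """
    fs = guard_factor * 2.0 * w_hz   # sampling rate, slightly above 2W
    ts = 1.0 / fs                    # sampling interval Ts
    slot = ts / n_channels           # share of Ts available to each sample
    return fs, ts, slot


fs, ts, slot = tdm_timing(w_hz=4000.0, n_channels=24)
print(f"fs = {fs:.0f} Hz, Ts = {ts * 1e6:.1f} us, slot = {slot * 1e6:.2f} us")
```

With W = 4 kHz and N = 24, the sampling interval is about 113.6 µs and each interleaved sample gets about 4.7 µs of it, which shows why the samples must be narrow.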

Q2. Explain bit interleaving.

Ans. 1. Digital signals are multiplexed by bit-by-bit interleaving: a selector switch sequentially takes a bit from each incoming line and applies it to the high-speed common line.

2. The output of this shared line is divided into its low-speed individual components at the system’s receiving end before being sent to its intended destinations.
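As a minimal sketch of the two steps above, the selector switch can be modelled as taking one bit from each line in turn, and the receiver as picking every N-th bit back out. The function names are illustrative, and the lines are assumed to carry equal-length bit strings with perfect synchronization.

```python
def interleave_bits(lines):
    """Multiplex equal-length bit strings by taking one bit from each in turn."""
    if len({len(bits) for bits in lines}) != 1:
        raise ValueError("all lines must be non-empty in number and equal in length")
    # zip(*lines) yields one tuple per time slot: (bit of line 0, bit of line 1, ...)
    return "".join("".join(slot) for slot in zip(*lines))


def deinterleave_bits(stream, n_lines):
    """Split the high-speed stream back into its n_lines low-speed components."""
    return [stream[i::n_lines] for i in range(n_lines)]


lines = ["1010", "1100", "0001"]
stream = interleave_bits(lines)
print(stream)                         # 110010100001
print(deinterleave_bits(stream, 3))   # ['1010', '1100', '0001']
```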

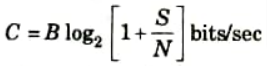

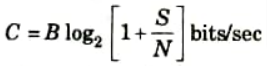

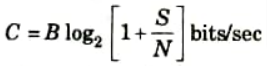

Q3. Explain Shannon-Hartley theorem.

Ans. 1. The Shannon Hartley theorem is a companion theorem to Shannon’s theorem that pertains to channels with gaussian noise.

2. The channel capacity of a white, bandlimited gaussian channel is

$$C = B \log_2\left(1 + \frac{S}{N}\right) \ \text{bits/s}$$

where B is the channel bandwidth, S is the signal power, and N is the total noise power within the channel bandwidth, i.e., N = ηB, with η/2 the two-sided power spectral density of the noise.

3. This theorem is important for two reasons. First, gaussian channels are typically encountered in physical systems. Second, the results found for a gaussian channel frequently provide a lower bound on the performance of a system operating over a non-gaussian channel.
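The Shannon-Hartley capacity, C = B log2(1 + S/N), is easy to evaluate numerically. The sketch below assumes S and N are given in the same linear power units (not dB); the function names and the 3 kHz, 30 dB example are illustrative choices, not figures from these notes.

```python
import math


def noise_power(eta, bandwidth_hz):
    """Total noise power N = eta * B for white noise of two-sided PSD eta/2."""
    return eta * bandwidth_hz


def channel_capacity(bandwidth_hz, signal_power, noise_pwr):
    """Capacity in bits/s of a white, bandlimited gaussian channel."""
    return bandwidth_hz * math.log2(1.0 + signal_power / noise_pwr)


# Example: B = 3 kHz and S/N = 1000 (i.e. 30 dB).
c = channel_capacity(3000.0, signal_power=1000.0, noise_pwr=1.0)
print(f"C = {c / 1000:.1f} kbit/s")
```

For this example the capacity comes out at roughly 29.9 kbit/s. When S/N = 1, the logarithm equals 1 and the capacity is numerically equal to the bandwidth.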

Q4. Write a short note on Shannon-Fano coding.

Ans. A. Shannon-Fano coding: This coding method is directed towards constructing reasonably efficient separable binary codes. Let [X] be the ensemble of messages to be transmitted and [P] the corresponding probabilities. The binary sequence ck of length nk associated with each message xk should fulfill the following conditions:

- 1. No binary sequence ck in use can be obtained from another by appending more binary digits to the shorter sequence (that is, no codeword is a prefix of another).

- 2. Transmission of the encoded message is reasonably efficient, i.e., 0 and 1 appear independently and with almost equal probability.

B. Procedure of Shannon-Fano coding:

An efficient code can be obtained by the following simple procedure, known as Shannon-Fano coding.

- 1. Arrange the source symbols in decreasing probability order.

- 2. Divide the symbols into two sets whose total probabilities are as nearly equal as possible, and assign 0 to the upper set and 1 to the lower set.

- 3. Repeat this process on each set, splitting it as nearly equiprobably as possible each time, until no further partitioning is possible.
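The three steps above can be sketched recursively. This is one possible reading of the procedure, not a definitive implementation: the function name, the dictionary input, and the rule for choosing the split point (minimize the difference between the two sets' total probabilities, taking the first such split on a tie) are assumptions made for illustration.

```python
def shannon_fano(probs):
    """Return {symbol: codeword} for a dict {symbol: probability}."""
    # Step 1: arrange the symbols in decreasing order of probability.
    symbols = sorted(probs, key=probs.get, reverse=True)
    codes = {s: "" for s in symbols}

    def split(group):
        if len(group) < 2:          # nothing left to partition
            return
        total = sum(probs[s] for s in group)
        running = 0.0
        best_index, best_diff = 1, float("inf")
        # Step 2: find the split that makes the two sets most nearly equiprobable.
        for i in range(1, len(group)):
            running += probs[group[i - 1]]
            diff = abs(total - 2.0 * running)   # |upper total - lower total|
            if diff < best_diff:
                best_index, best_diff = i, diff
        upper, lower = group[:best_index], group[best_index:]
        for s in upper:
            codes[s] += "0"         # upper set gets 0
        for s in lower:
            codes[s] += "1"         # lower set gets 1
        # Step 3: repeat on each set.
        split(upper)
        split(lower)

    split(symbols)
    return codes


print(shannon_fano({"x1": 0.5, "x2": 0.25, "x3": 0.125, "x4": 0.125}))
# {'x1': '0', 'x2': '10', 'x3': '110', 'x4': '111'}
```

In this example no codeword is a prefix of another, which is condition 1 of part A.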

Q5. What is information? How is information measured?

Ans. A. Information: The information in a message can be interpreted as the minimum number of binary digits required to encode the message.

B. Measurement of information:

- 1. Information has a surprise component, which results from unpredictability or unexpectedness. The more unexpected the event, the greater the surprise and hence the more information.

- 2. The probability that an event will occur is a measure of its unexpectedness and is therefore related to its information content. The amount of information obtained from a message thus increases as the probability of its occurrence decreases, i.e., as its uncertainty increases.

- 3. If I is the information received from the message and P is the probability that the message occurs, then I increases as P decreases: I → 0 as P → 1, and I → ∞ as P → 0.

- 4. Consider the case of four equiprobable messages m1, m2, m3, and m4. If these messages are encoded in binary form, we need a minimum of two binary digits per message. Each binary digit can assume two values.

- 5. Hence, a combination of two binary digits can form the four codewords 00, 01, 10, 11, which can be assigned to the four equiprobable messages m1, m2, m3, and m4 respectively.

- 6. Each of these four messages therefore takes twice as much transmission time as a message from a set of only two equiprobable messages (which needs one binary digit) and hence contains twice as much information.

- 7. Similarly we can encode any one of eight equiprobable messages with a minimum of three binary digits. This is because three binary digits form eight distinct codewords, which can be assigned to each of the eight messages.

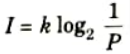

- 8. It can be seen that, in general, we need log2 n binary digits to encode each of n equiprobable messages. Because all the messages are equiprobable, each has probability P = 1/n, so we need log2 (1/P) binary digits.

- 9. Thus, the information I contained in a message with probability of occurrence P is proportional to log2 (1/P), i.e., I = k log2 (1/P), where k is a constant to be determined.
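The two, four and eight message cases can be checked numerically. The sketch below assumes the constant of proportionality k is taken as 1, so that information is measured in bits; the function name is illustrative.

```python
import math


def information_bits(p):
    """Information log2(1/P) of a message with probability P, assuming k = 1."""
    if not 0.0 < p <= 1.0:
        raise ValueError("probability must lie in (0, 1]")
    return math.log2(1.0 / p)


for n in (2, 4, 8):
    # Each of n equiprobable messages has probability 1/n.
    print(n, information_bits(1.0 / n))   # 1.0, 2.0 and 3.0 bits respectively
```

A certain message (P = 1) carries zero information, and the value grows without bound as P approaches 0.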

Q6. Explain information rate, channel capacity. Explain Huffman coding in brief.

Ans. A. Huffman coding:

Huffman encoding results in an optimum code. It is the code that has the highest efficiency. The Huffman encoding procedure is as follows:

- 1. Arrange the source symbols in decreasing probability order.

- 2. Combine the probabilities of the two symbols with the lowest probabilities and reorder the resulting probabilities; this step is known as reduction 1. The same procedure is repeated until only two ordered probabilities are left.

- 3. Begin encoding with the final reduction, which consists of exactly two ordered probabilities. Put 0 as the first digit in the codewords of all the source symbols associated with the first probability, and put 1 for those associated with the second probability.

- 4. Go back one reduction and, keeping all the assignments from step 3, assign 0 and 1 as the second digit for the two probabilities that were combined in that reduction.

- 5. Keep working backward in this way until the first column is reached.
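The reduction procedure above is usually done by hand in a table. As a rough programmatic equivalent, the sketch below uses a priority queue instead: it repeatedly merges the two least probable groups and prepends a digit to every symbol in each group, which produces the same kind of code without the explicit backward pass. The function name, the tie-breaking counter and the example probabilities are assumptions; with ties, individual codewords may differ from a hand-worked table, but the average length is the same.

```python
import heapq
from itertools import count


def huffman(probs):
    """Return {symbol: codeword} for a dict {symbol: probability}."""
    tiebreak = count()  # unique counter so the heap never compares the lists
    heap = [(p, next(tiebreak), [s]) for s, p in probs.items()]
    heapq.heapify(heap)
    codes = {s: "" for s in probs}
    if len(heap) == 1:                  # a single symbol still needs one digit
        codes[heap[0][2][0]] = "0"
    while len(heap) > 1:
        p_low, _, group_low = heapq.heappop(heap)     # lowest probability
        p_next, _, group_next = heapq.heappop(heap)   # second lowest
        for s in group_next:
            codes[s] = "0" + codes[s]   # higher of the two gets 0
        for s in group_low:
            codes[s] = "1" + codes[s]   # lower of the two gets 1
        # One "reduction": the two entries are replaced by their sum.
        heapq.heappush(heap, (p_low + p_next, next(tiebreak), group_low + group_next))
    return codes


probs = {"x1": 0.4, "x2": 0.2, "x3": 0.2, "x4": 0.1, "x5": 0.1}
codes = huffman(probs)
avg_len = sum(probs[s] * len(codes[s]) for s in probs)
print(codes, round(avg_len, 2))
```

For these example probabilities the average codeword length works out to 2.2 binary digits per symbol.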

B. Information rate: If the source generates messages at the rate of r messages per second and H is its entropy (the average information per message, in bits), then the information rate is defined as:

R = rH = average number of bits of information per second

C. Channel capacity: It is defined as the intrinsic ability of a channel to convey information.
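The information rate R = rH from part B can be worked through for a small source. This is a sketch under stated assumptions: H is computed as the source entropy, the sum of P log2(1/P) over all messages, and the message probabilities and the rate of 1000 messages per second are made-up example values.

```python
import math


def entropy_bits(probs):
    """Source entropy H in bits per message: sum of P * log2(1/P)."""
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0.0)


def information_rate(r, probs):
    """Information rate R = r * H in bits per second."""
    return r * entropy_bits(probs)


probs = [0.5, 0.25, 0.125, 0.125]
print(entropy_bits(probs))             # 1.75 bits per message
print(information_rate(1000, probs))   # 1750.0 bits per second
```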
